What is the Best Way to Store and Process Big Data?

A data lake is a common place to store big data. Data warehouses, by contrast, are usually built on relational databases and contain only structured data.

Data lakes, however, can store many types of data. They are typically built on Hadoop clusters, cloud object storage, NoSQL databases, or other big data platforms.

Big data environments often combine many systems in a distributed architecture.

For example, a central data lake might be integrated with other platforms, such as relational databases or a data warehouse. Big data systems can also store data in raw form, which is then filtered and organised as needed for specific analytics uses.

In other cases, the data may be preprocessed with data mining and data preparation tools so that it is ready for applications that run regularly.
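Storing raw data and applying structure only when an analysis needs it is often called schema-on-read. The Python sketch below illustrates the idea under simple assumptions: the event records, their field names and the `purchases_by_customer` function are hypothetical, and the raw lines sit in a list rather than in a real data lake.

```python
import json
from collections import defaultdict

# Hypothetical raw events as they might land in a data lake: JSON lines,
# stored unmodified, with fields that vary from record to record.
RAW_EVENTS = [
    '{"type": "purchase", "customer": "c1", "amount": 30.0}',
    '{"type": "page_view", "customer": "c2", "url": "/home"}',
    '{"type": "purchase", "customer": "c2", "amount": 12.5}',
    '{"type": "purchase", "customer": "c1", "amount": 7.5}',
]

def purchases_by_customer(raw_lines):
    """Apply a schema at read time: keep only purchase events and
    organise them into total spend per customer."""
    totals = defaultdict(float)
    for line in raw_lines:
        event = json.loads(line)
        if event.get("type") != "purchase":
            continue  # other analyses may use these records; this one skips them
        totals[event["customer"]] += event["amount"]
    return dict(totals)

print(purchases_by_customer(RAW_EVENTS))
```

The raw lines are never rewritten; a different analysis (for example, page views per URL) could read the same records with its own filter.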

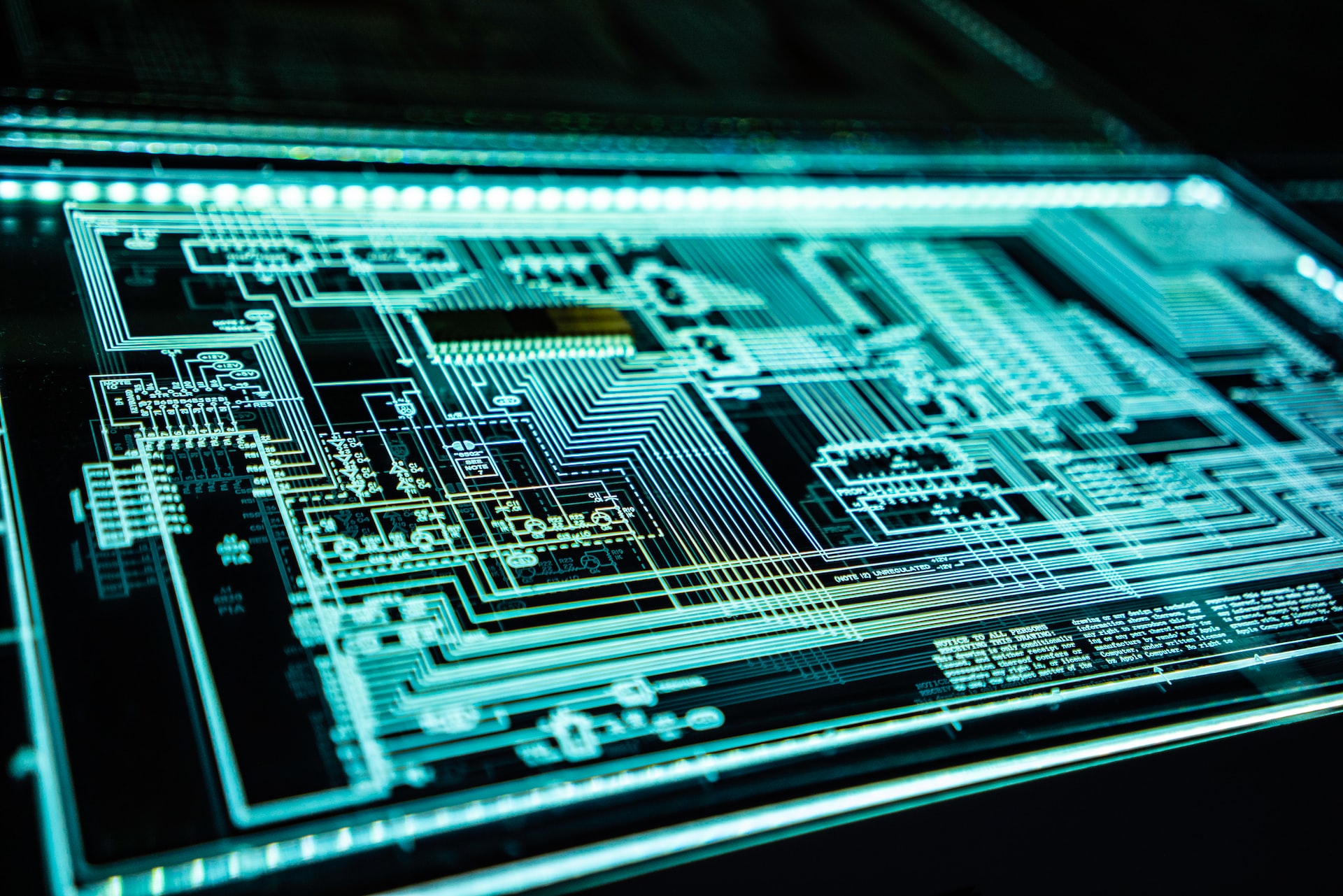

Processing big data requires an underlying infrastructure with substantial computing power.

Clustered systems provide that power by distributing processing workloads across hundreds or thousands of commodity servers, using technologies such as Hadoop and Spark.
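Hadoop's original processing model, MapReduce, splits a job into map, shuffle and reduce phases so that each server can work on its own slice of the data. The following is a single-process Python sketch of that pattern, not Hadoop or Spark code: the partitions stand in for data blocks held on different servers, and all function names are hypothetical.

```python
from collections import defaultdict

def map_task(lines):
    """Map: emit a (word, 1) pair for every word in one partition."""
    return [(word, 1) for line in lines for word in line.lower().split()]

def shuffle(map_outputs):
    """Shuffle: group the values emitted by all map tasks by key."""
    groups = defaultdict(list)
    for pairs in map_outputs:
        for key, value in pairs:
            groups[key].append(value)
    return groups

def reduce_task(key, values):
    """Reduce: combine all values emitted for one key."""
    return key, sum(values)

def word_count(partitions):
    # On a cluster, each map task would run on the server holding its
    # partition; here they simply run one after another.
    map_outputs = [map_task(p) for p in partitions]
    grouped = shuffle(map_outputs)
    return dict(reduce_task(k, v) for k, v in grouped.items())

# Hypothetical data split into two partitions, as if stored on two servers.
partitions = [
    ["Big data needs big clusters"],
    ["Spark and Hadoop run clusters"],
]
counts = word_count(partitions)
print(counts)
```

Because each map task reads only its own partition, the map phase can be spread across as many servers as there are partitions; only the shuffle moves data between them.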

Obtaining the processing capacity that big data requires in a cost-effective way is a challenge, though. As a result, the cloud is becoming a popular place to run big data systems.

Organisations can deploy their own cloud-based systems or use managed big-data-as-a-service offerings from cloud providers.

Cloud users can scale up the number of servers just long enough to complete large data analytics projects. The organisation pays only for the compute and storage time it actually uses, and cloud instances can be shut down until they are needed again.
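The pay-per-use point is easiest to see with some arithmetic. The sketch below compares a short burst of cloud capacity with keeping the same cluster running all month; the node count, hours and prices are invented for illustration and are not any provider's actual rates.

```python
def cloud_cost(nodes, hours, rate_per_node_hour, storage_gb, rate_per_gb_month, months=1):
    """Pay-per-use estimate: compute time plus storage time (hypothetical rates)."""
    compute = nodes * hours * rate_per_node_hour
    storage = storage_gb * rate_per_gb_month * months
    return compute + storage

# Hypothetical project: 50 servers for a 10-hour analytics job, 2,000 GB stored for a month.
burst = cloud_cost(nodes=50, hours=10, rate_per_node_hour=0.50,
                   storage_gb=2000, rate_per_gb_month=0.02)

# The same 50 servers left running for a 30-day month (720 hours).
always_on = cloud_cost(nodes=50, hours=720, rate_per_node_hour=0.50,
                       storage_gb=2000, rate_per_gb_month=0.02)

print(f"Burst: ${burst:,.2f}  Always on: ${always_on:,.2f}")
```

With these made-up numbers the burst costs $290 against $18,040 for the always-on cluster; the storage charge is identical, so the whole difference comes from shutting the servers down when the job is finished.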

How big data analytics works

Data scientists and other analysts need a deep understanding of the available data and a clear idea of what they are looking for.

Data preparation is a critical first step in any analytics process. It includes data cleansing, validation, transformation, and profiling.
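As a minimal sketch of those four steps, the Python below profiles, cleanses, validates and transforms a handful of customer records. The records, field names and rules (for example, treating a repeated `id` as a duplicate and using a deliberately simple email pattern) are assumptions chosen for illustration; at big data scale the same logic would usually run in a distributed engine or dedicated data preparation software.

```python
import re

# Hypothetical raw customer records with typical quality problems.
RAW = [
    {"id": "1", "email": " Alice@Example.com ", "age": "34"},
    {"id": "2", "email": "bob@example", "age": "29"},        # malformed email
    {"id": "3", "email": "carol@example.com", "age": ""},    # missing age
    {"id": "1", "email": "alice@example.com", "age": "34"},  # duplicate id
]

# Deliberately simple rule: something@something.something, no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def profile(records, field):
    """Profiling: summarise how complete and varied one field is."""
    values = [r.get(field) for r in records]
    missing = sum(1 for v in values if v in (None, ""))
    return {"count": len(values), "missing": missing, "distinct": len(set(values))}

def cleanse(records):
    """Cleansing: trim and lower-case emails, keep the first record per id."""
    seen, cleaned = set(), []
    for r in records:
        if r["id"] in seen:
            continue
        seen.add(r["id"])
        cleaned.append({**r, "email": r["email"].strip().lower()})
    return cleaned

def validate(records):
    """Validation: split records into those that pass the rules and those that fail."""
    valid, rejected = [], []
    for r in records:
        (valid if EMAIL_RE.match(r["email"]) else rejected).append(r)
    return valid, rejected

def transform(records):
    """Transformation: convert text fields to typed values for analysis."""
    return [
        {"id": int(r["id"]), "email": r["email"], "age": int(r["age"]) if r["age"] else None}
        for r in records
    ]

print(profile(RAW, "age"))
valid, rejected = validate(cleanse(RAW))
prepared = transform(valid)
print(prepared)
print(f"{len(rejected)} record(s) rejected")
```

Keeping rejected records rather than silently dropping them makes it possible to report on data quality and fix problems at the source.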

Once the data has been gathered and prepared, it can be analysed for different applications using advanced analytics tools, including predictive modelling, machine learning, and its deep learning offshoot.
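Predictive modelling can be as simple as fitting a trend to historical data and extrapolating it. The Python sketch below fits a straight line by ordinary least squares; the monthly figures are hypothetical, and on big data sets a model like this would normally be trained with a machine learning library running on the cluster rather than in plain Python.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def predict(model, x):
    """Apply a fitted (slope, intercept) model to a new input."""
    slope, intercept = model
    return slope * x + intercept

# Hypothetical history: month number vs. orders (in thousands).
months = [1, 2, 3, 4, 5, 6]
orders = [10.2, 11.9, 14.1, 15.8, 18.1, 19.9]

model = fit_line(months, orders)
print(f"slope={model[0]:.2f}, intercept={model[1]:.2f}")
print(f"forecast for month 7: {predict(model, 7):.1f}k orders")
```

Machine learning models follow the same fit-then-predict pattern, but learn far more complex relationships from many more input variables.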

Taking customer data as an example, big data can support several branches of analytics:

- Comparative analysis. This examines customer behaviour and real-time engagement to compare a company's products and services with those of its competitors.

- Social media listening. This analyses the social media conversations about a product or business to help target customers and identify potential issues.

- Marketing analytics. This provides information that can help improve marketing campaigns and promote products, services, and business initiatives.

- Sentiment analysis. Data gathered on customers can be analysed to reveal how they feel about a company or brand and to flag potential problems.
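Sentiment analysis is often done with trained language models, but the underlying idea can be shown with a simple lexicon-based scorer that counts positive and negative words. In the Python sketch below, the word lists and sample posts are hypothetical and far too small for real use.

```python
import re

# Hypothetical, deliberately tiny lexicons; real systems use curated
# sentiment lexicons or trained models.
POSITIVE = {"great", "love", "fast", "helpful"}
NEGATIVE = {"slow", "broken", "hate", "refund"}

def sentiment_score(text):
    """Number of positive words minus number of negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)

def label(text):
    """Map a score to a coarse sentiment label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

posts = [
    "Love the new app, support was really helpful!",
    "Delivery was slow and the box arrived broken.",
    "Ordered a jacket today.",
]

labels = [label(p) for p in posts]
negative_share = labels.count("negative") / len(labels)
for post, lab in zip(posts, labels):
    print(f"{lab:>8}: {post}")
print(f"Share of negative posts: {negative_share:.0%}")
```

Tracking the share of negative posts over time is one way such scores could flag emerging problems. This naive approach ignores negation ("not great") and sarcasm, which is why production systems rely on richer models.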

Big data challenges

Beyond processing capacity, designing a big data architecture is a common challenge, because big data systems have to be customised to each organisation's needs.

This is often a DIY project that requires IT and data managers to assemble a custom set of technologies and tools. In addition, deploying and managing big data systems requires different skills from those that database administrators and developers working with relational software typically have.

Managed cloud services can ease some of these issues, but IT managers must monitor cloud usage to keep costs under control. Migrating on-premises data sets and processing workloads to the cloud can also be difficult.

Another challenge in managing big data systems is making data available to data scientists and analysts in distributed environments with various data stores and platforms.

Data management and analytics teams are increasingly building data catalogs with metadata management and data lineage functions to help analysts find relevant data.
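To make the idea of a catalog with lineage concrete, here is a small in-memory Python sketch. The `Dataset` and `DataCatalog` classes and the dataset names are hypothetical and do not reflect any particular catalog product; lineage is modelled by recording each dataset's upstream sources and walking that graph. It assumes Python 3.9 or later for the built-in generic type hints.

```python
from dataclasses import dataclass, field

@dataclass
class Dataset:
    name: str
    owner: str
    description: str
    tags: set[str] = field(default_factory=set)
    upstream: list[str] = field(default_factory=list)  # datasets this one is derived from

class DataCatalog:
    def __init__(self) -> None:
        self._datasets: dict[str, Dataset] = {}

    def register(self, dataset: Dataset) -> None:
        self._datasets[dataset.name] = dataset

    def search(self, tag: str) -> list[str]:
        """Metadata search: names of all datasets carrying a tag."""
        return sorted(d.name for d in self._datasets.values() if tag in d.tags)

    def lineage(self, name: str) -> list[str]:
        """All datasets that `name` derives from, directly or indirectly."""
        seen: set[str] = set()
        stack = list(self._datasets[name].upstream)
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.add(current)
            if current in self._datasets:  # unregistered sources end the walk
                stack.extend(self._datasets[current].upstream)
        return sorted(seen)

catalog = DataCatalog()
catalog.register(Dataset("raw_orders", "sales-ops", "Order events from the web shop", {"sales", "raw"}))
catalog.register(Dataset("raw_customers", "crm-team", "CRM customer export", {"crm", "raw"}))
catalog.register(Dataset("orders_clean", "data-eng", "Deduplicated orders", {"sales"}, ["raw_orders"]))
catalog.register(Dataset("customer_revenue", "analytics", "Revenue per customer",
                         {"sales", "report"}, ["orders_clean", "raw_customers"]))

print(catalog.search("sales"))
print(catalog.lineage("customer_revenue"))
```

An analyst searching for sales data finds all three related data sets, and the lineage query shows which raw sources a report depends on, which helps when judging whether it can be trusted.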

However, integrating big data sets is often complicated, especially when data velocity and variety are factors.

How to create a big data strategy that works

Developing a big data strategy requires understanding the organisation's business goals and assessing both the data that is available and the data needed to meet those goals.

Next, you need to consider the following:

- Prioritising the planned use cases and applications.

- Identifying the new systems and tools that are required.

- Creating a deployment roadmap.

- Assessing internal skills to determine whether retraining or hiring is necessary.

A data governance programme and the associated quality management processes are essential to ensure that big data sets are consistent, clean, and properly used.

Another best practice for managing and analysing big data is to focus on business requirements and use data visualisation to assist in data discovery and analysis.

The people responsible for analysing and managing the data should understand the value that big data initiatives bring to the business. In addition, some tools let less technical users run predictive analytics applications, while others help businesses set up the infrastructure needed for big data projects.

Does your organisation have a data strategy in place?

Need support with data organisation? Contact EWM today to discuss your needs.